Welcome to HECToRNews 1, November 2008

Featuring:

- Introduction from NAG's HECToR CSE Team

- All about HECToR Distributed Support

- Enhance your work on HECToR with expert training

- HECToR Hardware

- HECToR User Group meeting

- Code Issues

- HECToR Events

Introduction from NAG's HECToR CSE Team

Welcome to the first HECToRNews! This e-newsletter aims to keep users updated with useful information on the national supercomputing service, including snippets on coding issues. It is produced by HECToR's Computational Science and Engineering (CSE) support team, a group of HPC specialists from the Numerical Algorithms Group (NAG). The CSE service exists to help HECToR's user community make optimum use of the supercomputer by providing assistance with porting, optimisation and scaling of applications, together with training and web-based resources. The team consists of around 15 people, with 10 based at NAG's headquarters in Oxford and the remainder in NAG's Manchester office. Many of the team provide core support, dealing directly with user queries, while others work on distributed CSE (dCSE) support placements.

We hope to make the newsletter as informative and relevant as possible. If you have any comments or suggestions for future issues, we'd be delighted to hear from you. You can call us on 01865 511245 or email us at [Email address deleted].

All about HECToR Distributed Support

Distributed support, also referred to as dCSE support, can be thought of as an extended support activity. It is available to provide help with:

- porting codes onto the HECToR system;

- improving the performance of codes on HECToR;

- re-factoring codes to improve long-term maintainability;

- developing algorithmic improvements in the field of high-performance computing.

Contracts are currently awarded for durations of 6 months to 2 years. Proposals are assessed by an independent review panel, which is held for each of the 4 funding calls per year. The award funds specialist help to improve your code and enhance its capability on HECToR. The specialist does not have to be from the HECToR team if someone else within the application area is particularly well suited. However, this is not a grant: the service is provided by NAG under contract from EPSRC. To be successful, a proposal should show evidence that the effort is likely to achieve its goals, i.e. that the general techniques are well proven and early tests indicate a strong likelihood of success. You can ask the core CSE service for help with code profiling to strengthen the proposal. Note that the proposal must be for software development and not for scientific research.

The current application deadline for projects on HECToR is 15th December 2008. NAG's HECToR team is available to visit institutions to talk about this service. If you're interested in meeting with us, either call us on 01865 511245 or email us at [Email address deleted].

More information can be found on the HECToR website http://www.hector.ac.uk/support/cse/distributedcse/

Enhance your work on HECToR with expert training

The NAG HECToR Team gives regular training sessions for users of HECToR and UK academics. Topics range from general HPC, such as MPI and OpenMP programming, to more HECToR-specific code optimisation techniques, code profiling and general working practices. The sessions have proven valuable to many who have already attended. Training sessions are held at NAG's offices in Oxford and Manchester, as well as on-site at universities around the country. If you'd like to talk to us about dedicated on-site HECToR training at your organisation, email us at [Email address deleted].

The training courses are provided free of charge to HECToR users and UK academics whose work is covered by the remit of one of the participating research councils (EPSRC, NERC and BBSRC). Other people may attend on payment of a course fee. Specific training courses in the major application codes for Computational Chemistry and Engineering are also available; see http://www.hector.ac.uk/support/cse/schedule/applcourses/ for details.

A calendar of forthcoming training sessions is available on the HECToR website: http://www.hector.ac.uk/support/cse/schedule/

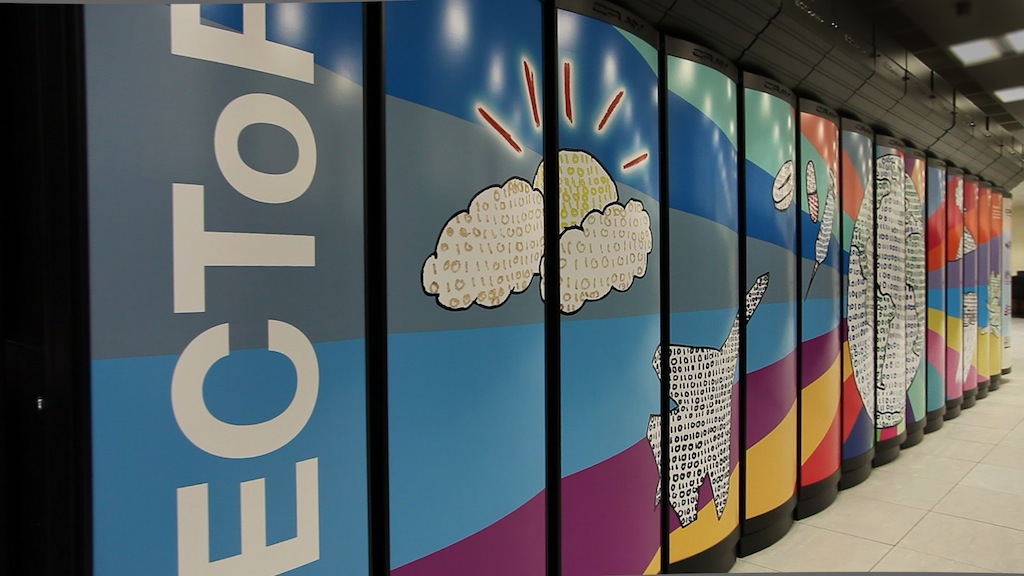

HECToR Hardware

In August 2008 a Cray X2 vector system was added to HECToR's existing XT4 system. It has 28 vector compute nodes, each with 4 Cray vector processors, making 112 processors in all. Each processor is capable of 25.6 Gflops, giving a total peak performance of 2.87 Tflops, and each 4-processor node shares 32 GB of memory. The interconnection network is based on a configurable fat-tree topology. The full 1 TB of main memory cannot be addressed across nodes using OpenMP; however, it is possible to do this using SHMEM directives. This system is now available to use alongside the main distributed part of HECToR, and training courses are running; see http://www.hector.ac.uk/support/cse/schedule/x2/ for details.
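The headline figures above follow from the per-node numbers, and a short shell sketch can check the arithmetic. The variable names are our own, chosen for illustration. Note that 28 nodes of 32 GB come to 896 GB, which the text rounds to 1 TB.

```shell
#!/bin/bash
# Figures quoted in the hardware description; variable names are illustrative.
nodes=28
procs_per_node=4
gflops_per_proc=25.6
mem_per_node_gb=32

# Total processor count and total memory are plain integer arithmetic.
total_procs=$((nodes * procs_per_node))
total_mem_gb=$((nodes * mem_per_node_gb))

# Peak performance needs floating point, so hand it to awk.
peak_tflops=$(awk -v p="$total_procs" -v g="$gflops_per_proc" \
    'BEGIN { printf "%.2f", p * g / 1000 }')

echo "Processors:   ${total_procs}"
echo "Peak Tflops:  ${peak_tflops}"
echo "Memory (GB):  ${total_mem_gb}"
```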

HECToR User Group 2008

The 2nd HECToR User Group meeting, or HUG for short, was held on 23 September 2008 at the e-Science Institute in Edinburgh. The meeting began with a system update presented by EPCC and Cray. This was followed by HECToR user Sylvain Laizet from Imperial College, who discussed his work on modelling turbulence. Professor Peter Coveney of University College London then presented his cutting-edge work on taking computational chemistry to the petascale, and Professor John Harding from Sheffield University gave a talk on HPC modelling of crystal nucleation and growth.

Further talks on dCSE projects were given by Fiona Reid of EPCC, who has improved the performance of the NEMO ocean modelling code, and Phil Hasnip of York University, who presented his work on improving the parallel performance of the molecular dynamics code CASTEP. The final session of the meeting was an open forum for users to voice their concerns to the appropriate HECToR management representatives.

Presentations and more details of the meeting can be downloaded from http://www.hector.ac.uk/support/cse/hug/

Code Issues

- Several users have reported CP2K issues with the Pathscale and GCC compilers on HECToR. Switching to PGI 7.2.2 will solve this problem.

- Out of memory errors appear to be common with NWChem for users with certain configurations. However, a new version of NWChem, which uses portals and is more efficient and scalable, should soon be available for the XT4... watch this space.

- A tip which may solve your MPI crash problem: try setting the following MPI environment variables in your jobscript:

export MPICH_MAX_SHORT_MSG_SIZE=128K

export MPICH_UNEX_BUFFER_SIZE=60M

The problem is a common one and usually occurs when a code which ran successfully on another system crashes on HECToR.

- Another very useful tip was kindly submitted by Keith Refson of RAL. Always make sure that the first line of your PBS script specifies a shell interpreter, e.g. #!/bin/bash. Leaving this out may cause basic error messages (such as reading past the EOF or trying to access an array out of bounds) to be suppressed from your output file.

- And finally, please remember that the NAG Fortran compiler is a very useful tool for checking that your Fortran code meets the Fortran coding standards. Use it with ftn from the default login with:

module swap PrgEnv-pgi PrgEnv-gnu

module load Nag-f95

For the full documentation please see http://www.nag.co.uk/nagware/np/doc_index.asp

Additionally,

module load Nag-ftools

nag_tools

will invoke the NAG Fortran Tools, consisting of a GUI for transforming and analysing Fortran 77 and Fortran 90/95 code.

NAG's Numerical Libraries for HECToR Users

The NAG Fortran (xt-libnagfl), Parallel (xt-libnagfd) and Shared (xt-libnagfs) numerical libraries are also available on HECToR and may be used by loading the appropriate modules.

More information is available from:

http://www.nag.co.uk/numeric/FL/manual/html/FLlibrarymanual.asp

http://www.nag.co.uk/numeric/FD/manual/html/FDlibrarymanual.asp

http://www.nag.co.uk/numeric/FL/manual/html/FSlibrarymanual.asp
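Returning to the two jobscript tips under Code Issues (the shell interpreter line and the MPICH environment variables), a minimal sketch of how they might fit together is given below. The job name, the commented-out launch line and the executable name are placeholders of our own, not HECToR defaults. Only the two export lines and the first line come from the tips themselves.

```shell
#!/bin/bash
# The first line names the shell interpreter, as the tip recommends.
# Placeholder job name (an assumption); add your own resource directives.
#PBS -N mpi_example

# Buffer sizes quoted in the MPI tip; tune them for your own code.
export MPICH_MAX_SHORT_MSG_SIZE=128K
export MPICH_UNEX_BUFFER_SIZE=60M

# Record the settings in the job output so a failed run is easier to diagnose.
echo "MPICH_MAX_SHORT_MSG_SIZE=${MPICH_MAX_SHORT_MSG_SIZE}"
echo "MPICH_UNEX_BUFFER_SIZE=${MPICH_UNEX_BUFFER_SIZE}"

# Hypothetical launch line; uncomment and adapt it on the system itself.
# aprun -n 4 ./my_app
```

Echoing the variables is optional, but it leaves a record in the output file of which settings a given run actually used.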

HECToR Events

The HECToR National Supercomputing Service and the Research Community Seminar 7 November 2008, University of Manchester

Jon Gibson of NAG will discuss the HECToR service and what it offers the UK research community. As well as describing the hardware, he will explain the application process and the support and training available to users. He will provide specific examples of how NAG, who provide the Computational Science and Engineering (CSE) support for HECToR, work with HECToR's users to improve the performance of their codes. He will focus particularly on the project "Cloud and Aerosol Research on Massively-Parallel Architectures", which he is currently working on.

The abstract and information about viewing the seminar over the Access Grid can be found at http://www.rcs.manchester.ac.uk/research/seminars/