Next: Sharing large data sets Up: Introduction Previous: Diffusion Monte Carlo (DMC) Contents

CASINO is a QMC software package developed and maintained over the last 10 years at Cavendish Laboratory, Cambridge University, UK [2,3].

In its core CASINO's QMC algorithm uses configurations of ![]() three

dimensional random walkers (RW) which evolve according to the

distribution probability associated to the trial wavefunction for the VMC

calculation, Eq (

three

dimensional random walkers (RW) which evolve according to the

distribution probability associated to the trial wavefunction for the VMC

calculation, Eq (![[*]](crossref.png) ), or to the Schrodinger equation for the DMC

calculation, Eq (

), or to the Schrodinger equation for the DMC

calculation, Eq (![[*]](crossref.png) ).

).

A typical calculation involves the following main steps:

Two main factors determine CASINO's performance for the function of the number of electrons in a given model: i)the memory needed to store orbitals values for the Monte Carlo algorithm and ii) the scaling of the computation time with the electron number [2].

Besides these problems it was found in practice that for parallel computation of models containing more than 1000 electrons and for computations with or on more that 5000 tasks there is an extremely long reading time of the orbitals' data or of the previously stored configurations (30 to 60 minutes).

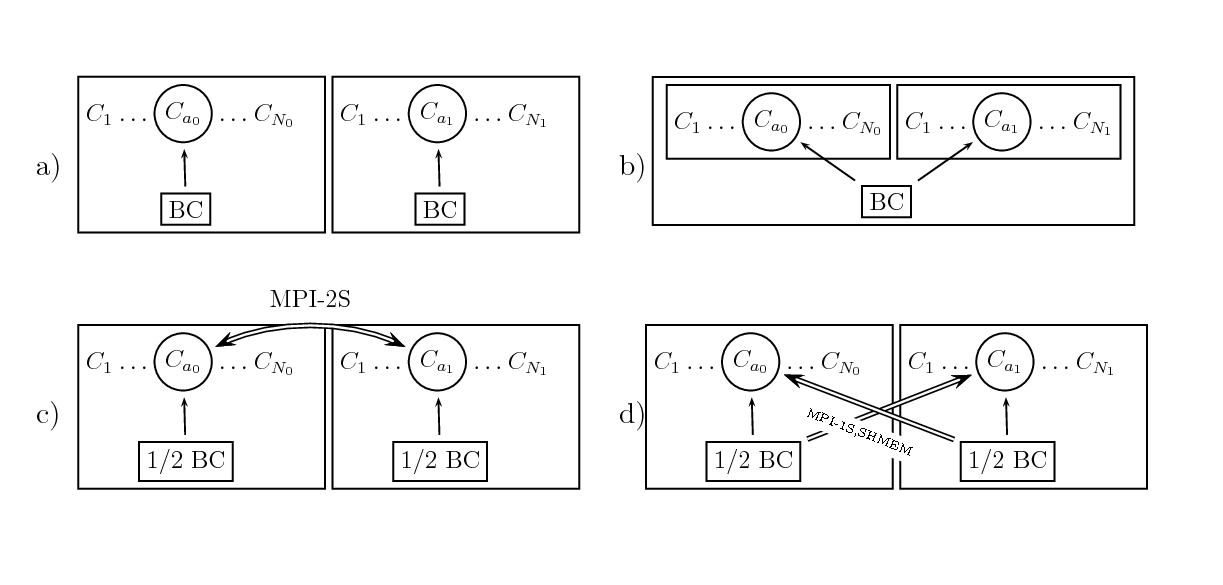

In the following sections of this dCSE report we shall present in detail the

proposed solutions for the above problems and discuss the performance

gains they bring to the code. Section ![[*]](crossref.png) describes the

algorithms used for shared OPO data and the results of the performance

measurements for each algorithm. Sections

describes the

algorithms used for shared OPO data and the results of the performance

measurements for each algorithm. Sections ![[*]](crossref.png) ,

,

![[*]](crossref.png) presents the second level parallelism algorithms and

their performance is analysed. Section

presents the second level parallelism algorithms and

their performance is analysed. Section ![[*]](crossref.png) presents the

improvements for the reading of initial data.

The benchmark tests were done on the dual core AMD Opteron which were

used in phase I of HECToR and on the quad core AMD Opteron that are currently in

use in phase II of HECToR. The dual core processor has the following

technical specifications: 2.8 GHz clock rate, 6 GB of RAM, 64KB L1

cache, 1MB L2 cache, peak performance 11.2 Gflops in double precision.

The quad core processor has the following technical specifications: 2.3

GHz clock rate, 8 GB of RAM, 64 L1 cache, 512 KB L2 cache, 2 MB L3

cache(shared), peak performance close to 40 Gflops in double

precision. The code was compiled with PGI v8.0.{2,6}.

presents the

improvements for the reading of initial data.

The benchmark tests were done on the dual core AMD Opteron which were

used in phase I of HECToR and on the quad core AMD Opteron that are currently in

use in phase II of HECToR. The dual core processor has the following

technical specifications: 2.8 GHz clock rate, 6 GB of RAM, 64KB L1

cache, 1MB L2 cache, peak performance 11.2 Gflops in double precision.

The quad core processor has the following technical specifications: 2.3

GHz clock rate, 8 GB of RAM, 64 L1 cache, 512 KB L2 cache, 2 MB L3

cache(shared), peak performance close to 40 Gflops in double

precision. The code was compiled with PGI v8.0.{2,6}.

|